The topic is related to the thread Two couplings to one interface (mixed dimensional coupling), but it will be a bit more extensive. I also drew nicer pictures this time!

Warning: This will be a long post, but with some pictures (I tried to stick with the preCICE color scheme).

Short summary

I need to couple a quantity that exists on two parallel meshes to a third mesh. From my investigations I see that it would be beneficial to map the quantities to the third mesh, sum them, and then carry out the acceleration method on the sum. I am convinced that preCICE has all the capabilities for that implemented, but I am not sure whether I can access them as a user or how to work around that.

When running the solver in serial, I can add the quantities within the solver that has the two meshes. However, it becomes complicated for parallel simulations, as I cannot expect all data that needs to be added to exist within the same process.

Problem description

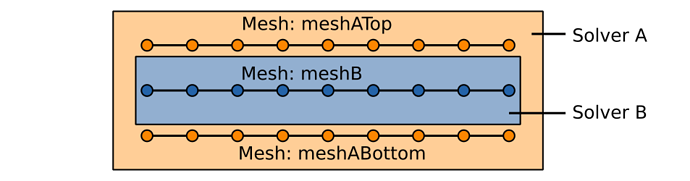

This is the current situation:

I have two solvers, Solver A and Solver B, where the domain of Solver A surrounds the domain of Solver B. Now I want to run a coupled situation where data between the meshes has to be exchanged.

The current coupling situation looks like this:

- Solver A computes a deformation of the top and the bottom (these are in general not the same). The deformations are communicated to Solver B.

- Solver B computes a pressure that is communicated to Solver A.

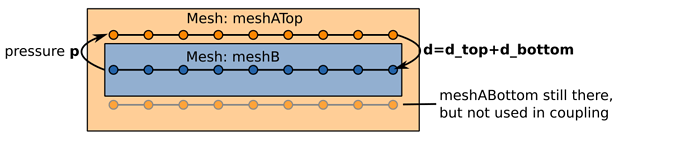

Solver B is not interested in the separate deformations d_top and d_bottom, but rather in their sum d_top+d_bottom. It also turns out that the coupled simulation is more stable if we exchange d_top+d_bottom and run the acceleration on the combined quantity. So we came up with the current workaround, which looks (roughly) like this:

We ignore the bottom mesh, compute d_top+d_bottom in Solver A, and afterwards hand it over to preCICE. Data that needs to be available at meshABottom is copied there within Solver A if necessary.

This works fine as long as we do not run any of the participants in parallel. If we run, for example, Solver A in parallel, the following situation is very common:

The two threads of Solver A do not have matching parts of meshATop and meshABottom and therefore I cannot compute d_top+d_bottom locally in Solver A.
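To make the mismatch concrete, here is a minimal sketch in plain Python. The partitioning, vertex positions, and values are made up for illustration, and the helper names `locally_summable` and `missing_partner` are hypothetical. It only shows which interface positions a rank can sum locally and where the partner value lives on another rank.

```python
# Hypothetical partitioning of Solver A across two ranks.
# Keys are vertex positions along the interface, values are deformations.
top_by_rank = {
    0: {0.0: 0.10, 1.0: 0.12},
    1: {2.0: 0.15, 3.0: 0.11},
}
bottom_by_rank = {
    0: {0.0: 0.02, 1.0: 0.03, 2.0: 0.04},
    1: {3.0: 0.05},
}


def locally_summable(rank):
    """Positions where this rank owns both d_top and d_bottom."""
    return sorted(set(top_by_rank[rank]) & set(bottom_by_rank[rank]))


def missing_partner(rank):
    """Positions where this rank owns only one of the two values."""
    return sorted(set(top_by_rank[rank]) ^ set(bottom_by_rank[rank]))


for rank in (0, 1):
    print(rank, locally_summable(rank), missing_partner(rank))
```

In this made-up layout, the position 2.0 has its top value on rank 1 and its bottom value on rank 0, so neither rank can form d_top+d_bottom there without communication.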

Solution ideas

The idea to solve it would be roughly the following:

- I hand the top and bottom mesh to preCICE as different meshes with d_top and d_bottom defined on them.

- Use the information preCICE has to map them onto one mesh. This could be meshB or within Solver A.

- Do the mathematical operation I want to do.

- Do the acceleration on the combined quantity d_top+d_bottom.
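The steps above can be sketched in plain Python. This is only an illustration of the order of operations, not preCICE code: the nearest-neighbor mapping and the constant under-relaxation are simple stand-ins for whatever mapping and acceleration are configured, and all mesh coordinates, values, and function names are assumptions.

```python
def nearest_neighbor_map(src_x, src_values, dst_x):
    """Map values from source vertices to destination vertices by nearest position."""
    mapped = []
    for x in dst_x:
        nearest = min(range(len(src_x)), key=lambda i: abs(src_x[i] - x))
        mapped.append(src_values[nearest])
    return mapped


def under_relax(previous, current, omega):
    """Constant under-relaxation: previous + omega * (current - previous)."""
    return [p + omega * (c - p) for p, c in zip(previous, current)]


# Hypothetical 1D interface data.
mesh_a_top_x = [0.0, 1.0, 2.0]
d_top = [0.10, 0.20, 0.30]
mesh_a_bottom_x = [0.1, 1.1, 1.9]
d_bottom = [0.01, 0.02, 0.03]
mesh_b_x = [0.0, 1.0, 2.0]

# Steps 1 and 2: both quantities are mapped onto the same target mesh.
top_on_b = nearest_neighbor_map(mesh_a_top_x, d_top, mesh_b_x)
bottom_on_b = nearest_neighbor_map(mesh_a_bottom_x, d_bottom, mesh_b_x)

# Step 3: the mathematical operation, here a plain sum.
d_sum = [t + b for t, b in zip(top_on_b, bottom_on_b)]

# Step 4: acceleration acts on the combined quantity only.
d_sum_previous = [0.0, 0.0, 0.0]
d_sum_accelerated = under_relax(d_sum_previous, d_sum, omega=0.5)
print(d_sum_accelerated)
```

The point of the ordering is that the acceleration never sees d_top or d_bottom individually, only their sum on the common mesh.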

Implementing this directly in preCICE would be nice, and it is also on my agenda, but this needs some time and thought. At the moment I need a quick solution.

Realization of solution

I am not sure if any of the ideas are feasible/legal in preCICE. Note that the code is currently using preCICE 1.6. These are the ideas that came up so far:

Option 1: Map from Solver A to Solver A

I could make sure that each thread of Solver A has matching parts of meshATop and meshABottom defined. In the first step the solvers write the data they have to their meshes via preCICE. Afterwards they read from the local mesh using preCICE. All data should be available locally such that the computed d_top+d_bottom is written to Solver B and the acceleration shall be executed on d_top+d_bottom.

In order for the data to be mapped I guess I would have to introduce a fake time window and call advance twice.

Option 2: Map from Solver A to Solver B then send it back and forth

Solver A writes its local deformations d_top and d_bottom to Solver B via preCICE. No acceleration shall be used. Solver B gets matching pairs of d_top and d_bottom and computes d_top+d_bottom. Solver B sends d_top+d_bottom back to Solver A. Solver A sends d_top+d_bottom back to Solver B, and acceleration is carried out on the combined quantity. Alternatively, Solver B could send the combined quantity d_top+d_bottom to itself (if allowed).

In order for the data to be mapped I guess I would have to introduce a fake time window and call advance twice.

Option 3: Force matching partitioning

In case of a parallel simulation make sure that the domain of Solver A is always partitioned to contain matching parts of meshATop and meshABottom.

I am not sure if that is possible. It might be a hard restriction for possible partitions.
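A minimal sketch of what such a restriction could look like, assuming (hypothetically) that matching vertices of meshATop and meshABottom share the same in-plane coordinate x: if ownership is decided from x alone, matching vertices always end up on the same rank. The function `rank_for_x` and the data are made up for illustration; a real partitioner would also have to balance the load of the volume mesh.

```python
def rank_for_x(x, x_min, x_max, n_ranks):
    """Assign a position to a rank by splitting [x_min, x_max] into equal bands."""
    band = (x_max - x_min) / n_ranks
    return min(int((x - x_min) / band), n_ranks - 1)


# Hypothetical in-plane coordinates of the two interface meshes.
top_x = [0.0, 1.0, 2.0, 3.0]
bottom_x = [0.0, 1.0, 2.0, 3.0]
n_ranks = 2

top_owner = {x: rank_for_x(x, 0.0, 3.0, n_ranks) for x in top_x}
bottom_owner = {x: rank_for_x(x, 0.0, 3.0, n_ranks) for x in bottom_x}

# Matching vertices land on the same rank, so the sum can be formed locally.
print(top_owner == bottom_owner)
```

This only works when the partitioning cuts through the domain perpendicular to the interface, which is exactly the restriction on possible partitions mentioned above.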

Option 4: Communicate data within Solver A without preCICE

This avoids a lot of problems with the coupling, but it is hard for me to implement. It also feels unnecessary, since preCICE has all the information that would be needed to do that.

If anything is unclear, please let me know and I will draw some more figures and/or come up with more explanations.
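For illustration, the data exchange for Option 4 could look roughly like the following. This is a sketch in plain Python that emulates an all-gather between ranks with dictionaries instead of real MPI calls; the layout, the values, and the helper names `emulate_allgather` and `summed_on_rank` are assumptions, and matching vertices are assumed to share the same position key.

```python
# Hypothetical per-rank data: position -> deformation.
top_by_rank = {
    0: {0.0: 0.10, 1.0: 0.12},
    1: {2.0: 0.15, 3.0: 0.11},
}
bottom_by_rank = {
    0: {0.0: 0.02, 1.0: 0.03, 2.0: 0.04},
    1: {3.0: 0.05},
}


def emulate_allgather(data_by_rank):
    """Stand-in for an MPI all-gather: every rank sees the merged data of all ranks."""
    merged = {}
    for local in data_by_rank.values():
        merged.update(local)
    return merged


def summed_on_rank(rank):
    """Compute d_top + d_bottom for the top vertices owned by this rank."""
    all_bottom = emulate_allgather(bottom_by_rank)
    return {x: d + all_bottom[x] for x, d in top_by_rank[rank].items()}


for rank in (0, 1):
    print(rank, summed_on_rank(rank))
```

Gathering the whole bottom mesh on every rank is the simplest variant; a real implementation would more likely exchange only the values each rank is missing.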