Hello everyone!

1. Model description

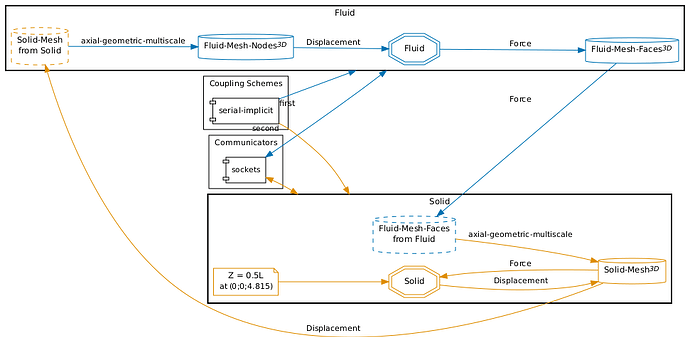

I am trying to simulate a classic fluid-structure interaction (FSI) problem: the deformation of a flexible cylinder in an incoming flow. I am using OpenFOAM for the fluid domain and CalculiX for the structural domain. Because the cylinder has a high length-to-diameter ratio and the Reynolds number is high enough to require LES, I am trying to reduce the computational cost by coupling a 3D fluid mesh with a 1D structural mesh. The structural mesh consists of B32R beam elements.
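To make the 3D-1D idea concrete, here is a minimal Python sketch of what collecting surface forces onto a beam amounts to: each force acting on a fluid surface face is assigned to the beam node nearest to it along the cylinder axis and summed there. This only illustrates the concept and is not how preCICE implements its geometric multiscale mappings. The function name `collect_forces_on_beam`, the choice of z as the beam axis, and the sample numbers are all assumptions made up for this example.

```python
from bisect import bisect_left


def collect_forces_on_beam(face_centres, face_forces, beam_z):
    """Sum 3D surface forces onto the nearest 1D beam node (nearest in z).

    face_centres: list of (x, y, z) face-centre coordinates on the wetted surface
    face_forces:  list of (fx, fy, fz) forces acting on those faces
    beam_z:       sorted list of beam node positions along z (assumed beam axis)
    """
    nodal_forces = [[0.0, 0.0, 0.0] for _ in beam_z]
    for (_, _, z), force in zip(face_centres, face_forces):
        # Find the beam node closest to this face along the axis.
        i = bisect_left(beam_z, z)
        if i == len(beam_z):
            i -= 1
        elif i > 0 and z - beam_z[i - 1] <= beam_z[i] - z:
            i -= 1
        for k in range(3):
            nodal_forces[i][k] += force[k]
    return nodal_forces


# Hypothetical data: four surface faces and three beam nodes.
centres = [(0.5, 0.0, 0.1), (-0.5, 0.0, 0.2), (0.0, 0.5, 0.9), (0.0, -0.5, 2.0)]
forces = [(1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 3.0, 0.0), (0.0, 0.0, 4.0)]
print(collect_forces_on_beam(centres, forces, beam_z=[0.0, 1.0, 2.0]))
```

Summing rather than averaging keeps the total force on the beam equal to the total force on the surface, which is the property I would expect from a force mapping in this kind of setup.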

2. Geometric multiscale mapping with parallel OpenFOAM and single-core CalculiX

While configuring the geometric multiscale mapping, I tried both the axial and the radial mapping methods with pimpleFoam running in parallel on the OpenFOAM side, and encountered the following error messages (the first is from the axial mapping, the second from the radial mapping).

---[preciceAdapter] Loaded the OpenFOAM-preCICE adapter - v1.3.1.

---[preciceAdapter] Reading preciceDict...

---[precice] ERROR: An unexpected exception occurred during configuration: Axial geometric multiscale mapping is not available for parallel participants..

---[preciceAdapter] Loaded the OpenFOAM-preCICE adapter - v1.3.1.

---[preciceAdapter] Reading preciceDict...

---[precice] ERROR: An unexpected exception occurred during configuration: Radial geometric multiscale mapping is not available for parallel participants..

3. Geometric multiscale mapping with single-core OpenFOAM and CalculiX

Next, I ran both OpenFOAM and CalculiX on a single core, and the previous error no longer appeared. However, the OpenFOAM and CalculiX terminals then reported the following errors, respectively.

Invalid MIT-MAGIC-COOKIE-1 key

#0 Foam::error::printStack(Foam::Ostream&) at ??:?

#1 Foam::sigSegv::sigHandler(int) at ??:?

#2 ? in /lib/x86_64-linux-gnu/libpthread.so.0

#3 ? in /lib/x86_64-linux-gnu/libprecice.so.3

#4 ? in /lib/x86_64-linux-gnu/libprecice.so.3

#5 ? in /lib/x86_64-linux-gnu/libprecice.so.3

#6 preciceAdapter::Adapter::initialize() at ??:?

#7 preciceAdapter::Adapter::configure() at ??:?

#8 Foam::functionObjects::preciceAdapterFunctionObject::read(Foam::dictionary const&) at ??:?

#9 Foam::functionObjects::preciceAdapterFunctionObject::preciceAdapterFunctionObject(Foam::word const&, Foam::Time const&, Foam::dictionary const&) at ??:?

#10 Foam::functionObject::adddictionaryConstructorToTable<Foam::functionObjects::preciceAdapterFunctionObject>::New(Foam::word const&, Foam::Time const&, Foam::dictionary const&) at ??:?

#11 Foam::functionObject::New(Foam::word const&, Foam::Time const&, Foam::dictionary const&) at ??:?

#12 Foam::functionObjectList::read() at ??:?

#13 Foam::Time::run() const at ??:?

#14 ? in ~/OpenFOAM/OpenFOAM-v2012/platforms/linux64GccDPInt32Opt/bin/pimpleFoam

#15 __libc_start_main in /lib/x86_64-linux-gnu/libc.so.6

#16 ? in ~/OpenFOAM/OpenFOAM-v2012/platforms/linux64GccDPInt32Opt/bin/pimpleFoam

Segmentation fault (core dumped)

Invalid MIT-MAGIC-COOKIE-1 key

corrupted double-linked list

[amax:1211213] *** Process received signal ***

[amax:1211213] Signal: Aborted (6)

[amax:1211213] Signal code: (-6)

[amax:1211213] [ 0] /lib/x86_64-linux-gnu/libpthread.so.0(+0x14420)[0x7f8601d87420]

[amax:1211213] [ 1] /lib/x86_64-linux-gnu/libc.so.6(gsignal+0xcb)[0x7f860177f00b]

[amax:1211213] [ 2] /lib/x86_64-linux-gnu/libc.so.6(abort+0x12b)[0x7f860175e859]

[amax:1211213] [ 3] /lib/x86_64-linux-gnu/libc.so.6(+0x8d26e)[0x7f86017c926e]

[amax:1211213] [ 4] /lib/x86_64-linux-gnu/libc.so.6(+0x952fc)[0x7f86017d12fc]

[amax:1211213] [ 5] /lib/x86_64-linux-gnu/libc.so.6(+0x9594c)[0x7f86017d194c]

[amax:1211213] [ 6] /lib/x86_64-linux-gnu/libc.so.6(+0x95a7c)[0x7f86017d1a7c]

[amax:1211213] [ 7] /lib/x86_64-linux-gnu/libc.so.6(+0x96fe0)[0x7f86017d2fe0]

[amax:1211213] [ 8] /lib/x86_64-linux-gnu/libprecice.so.3(+0x2ce22b)[0x7f86029fc22b]

[amax:1211213] [ 9] /lib/x86_64-linux-gnu/libprecice.so.3(+0x2ac48d)[0x7f86029da48d]

[amax:1211213] [10] /lib/x86_64-linux-gnu/libprecice.so.3(+0x260e98)[0x7f860298ee98]

[amax:1211213] [11] /lib/x86_64-linux-gnu/libprecice.so.3(+0x2d7338)[0x7f8602a05338]

[amax:1211213] [12] /lib/x86_64-linux-gnu/libprecice.so.3(+0x108d4f)[0x7f8602836d4f]

[amax:1211213] [13] /lib/x86_64-linux-gnu/libprecice.so.3(_ZN7precice11ParticipantD1Ev+0x249)[0x7f86028556c9]

[amax:1211213] [14] /lib/x86_64-linux-gnu/libprecice.so.3(+0xc2855)[0x7f86027f0855]

[amax:1211213] [15] /lib/x86_64-linux-gnu/libc.so.6(__cxa_finalize+0xce)[0x7f8601782fde]

[amax:1211213] [16] /lib/x86_64-linux-gnu/libprecice.so.3(+0x75077)[0x7f86027a3077]

[amax:1211213] *** End of error message ***

Aborted (core dumped)

4. Attached files

The structural mesh, the settings files for OpenFOAM and CalculiX, the precice-config, and the logs from the parallel-implicit and serial-implicit tests are attached.

all.msh (55.8 KB)

config.yml (234 Bytes)

parallel-implicit-fluid.log (3.1 KB)

parallel-implicit-solid.log (8.1 KB)

precice-config.xml (3.4 KB)

riserVIV.inp (570 Bytes)

serial-implicit-fluid.log (3.1 KB)

serial-implicit-solid.log (7.7 KB)

controlDict.txt (1.5 KB)

preciceDict.txt (1.2 KB)

Could anyone advise on the possible causes of these errors and on how to set up the precice-config and the other files correctly? Also, is this 3D-1D FSI coupling approach with OpenFOAM and CalculiX feasible?

Thank you in advance for any insights or assistance you may offer!